Most lead scoring systems fail not because of bad models, but because they miss intent.

In real-world B2B systems, sales teams often end up chasing the wrong leads, which results in wasted effort, slower response times, and lost revenue opportunities.

In this article, I’ll show how I applied Google’s small model strategy, inspired by the research paper “Small Models, Big Results: Achieving Superior Intent Extraction through Decomposition”, to build an intent-aware lead scoring system that bridges research and real-world impact.

Why this matters:

Better lead scoring directly impacts:

• Conversion rates

• Sales team efficiency

• Revenue growth

What is a Lead and Why is Lead Scoring Needed?

In marketing, a lead refers to a potential customer who has shown some level of interest and can be passed to the sales team for further conversion.

In B2B businesses, a large number of leads are generated daily through digital platforms such as websites and mobile applications. However, not all leads have the same potential. Some leads are highly likely to convert, especially if engaged early, while others may have low intent or require more nurturing.

For example, if a website generates 100 leads per day, it becomes crucial to decide which leads should be contacted first. This is where lead scoring comes into play. Lead scoring helps prioritize leads based on their likelihood to convert, enabling teams to focus on high-value opportunities and improve overall conversion efficiency.

Paper Summary

Before diving into the solution, let’s briefly understand what this paper proposes.

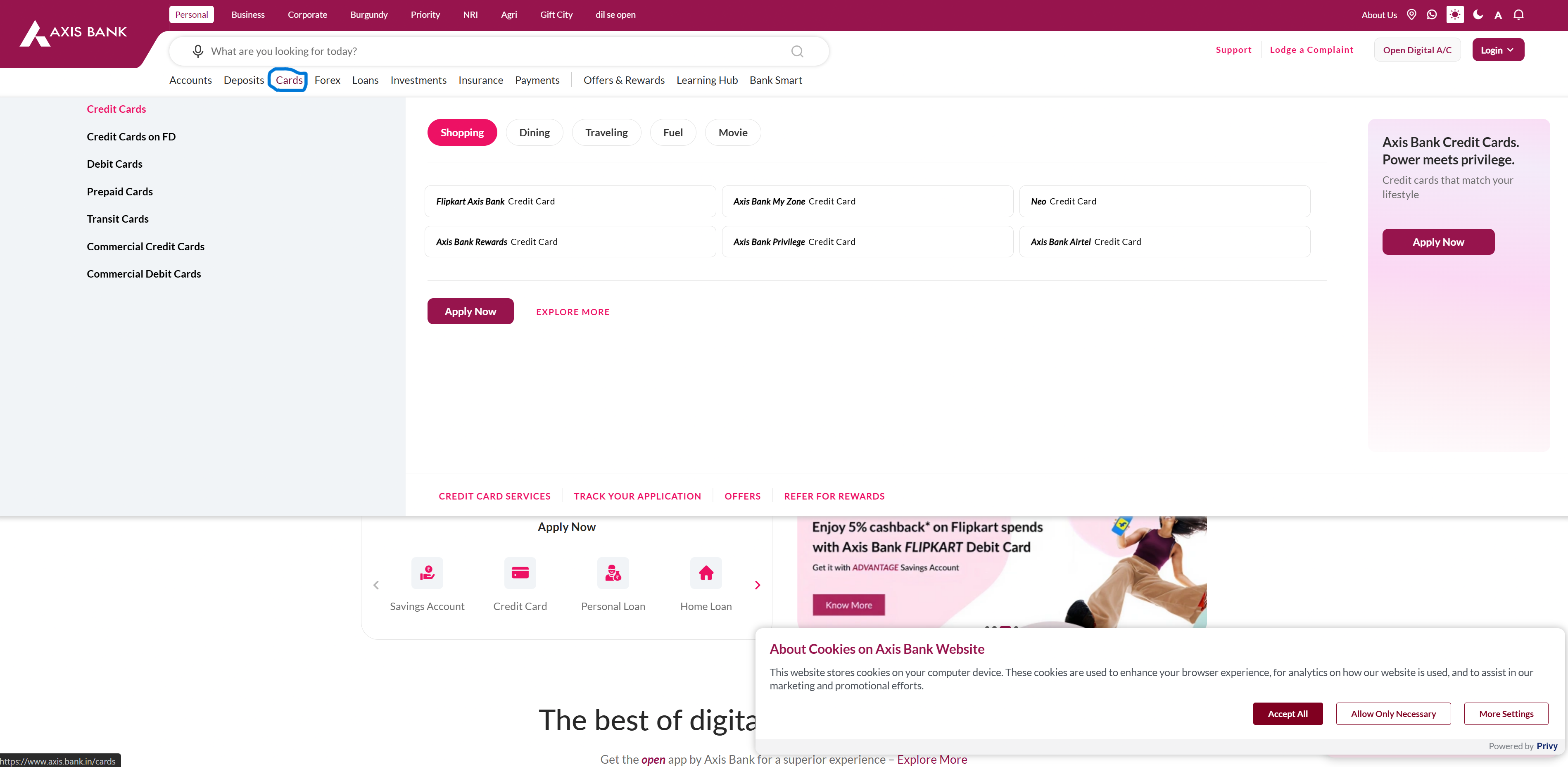

The paper demonstrates how we can infer a user’s intent based on their journey, which is essentially a sequence of interactions and actions performed during a session. For example, a user might start on the homepage, click on the “Cards” section, scroll through the content, and then navigate to the “Contact Us” page. This sequence of actions within a single session is referred to as a user journey.

The key idea is to use this journey data to detect user intent through a two-stage approach.

Stage 1: Interaction Summarization

In Stage 1, the system takes user interactions (actions + screen context) and converts them into human-readable summaries. In simple terms, this is a summarization step where each interaction in the journey is processed independently.

For example, consider the following customer journey:

[Cards] click → [Cards_page] scroll → [know_more] click

We take each step and summarize it using the available signals (such as the UI and the action performed).

Interaction Summary Output

{
    'screen_context': [
        "Navigation menu with 'Cards' link",
        "Sidebar with card categories",
        "List of featured credit cards",
        "Search bar and Login button"
    ],
    'user_action': 'The user clicked on the cards navigation menu item'
}

Here, the focus is on two things:

- What the user saw (screen context)

- What action the user performed

This structured representation makes it easier to understand behavior. For example, if a user chooses “Cards” out of multiple available options, it indicates a higher likelihood that they are interested in card-related products.

This process is repeated for all steps in the journey to generate a structured summary of the entire session.

Stage 2: Intent Extraction

Once the entire journey is summarized, these summaries are passed to a language model to infer the user’s overall intent.

These two stages, interaction summarization and intent extraction, form the core components of the approach presented in the paper and are the foundation of the system we will design.

Lead Scoring System Design

Now the big question is: should we build this system to run in real time, or should we process the journey after we capture the lead?

Real-time System

In a real-time system, user interactions are processed as they happen, and intent is inferred immediately.

Pros:

- Immediate signals

- Enables in-session nudges

- Useful for personalization

Cons:

- Incomplete context

- No identity early on

- Higher infra complexity

Near Real-Time (Post-Lead)

Alternatively, we can wait until the user is identified and reconstruct their journey before inferring intent.

Pros:

- Complete journey

- Better accuracy

- Easier identity mapping

Cons:

- Delayed insights

- No ability to influence current session

If we look closely, both approaches solve part of the problem, but neither captures the full picture. So we combine them into a hybrid approach, in which signals captured in real time and post-session intelligence are used together to generate a confident score.

Hybrid System

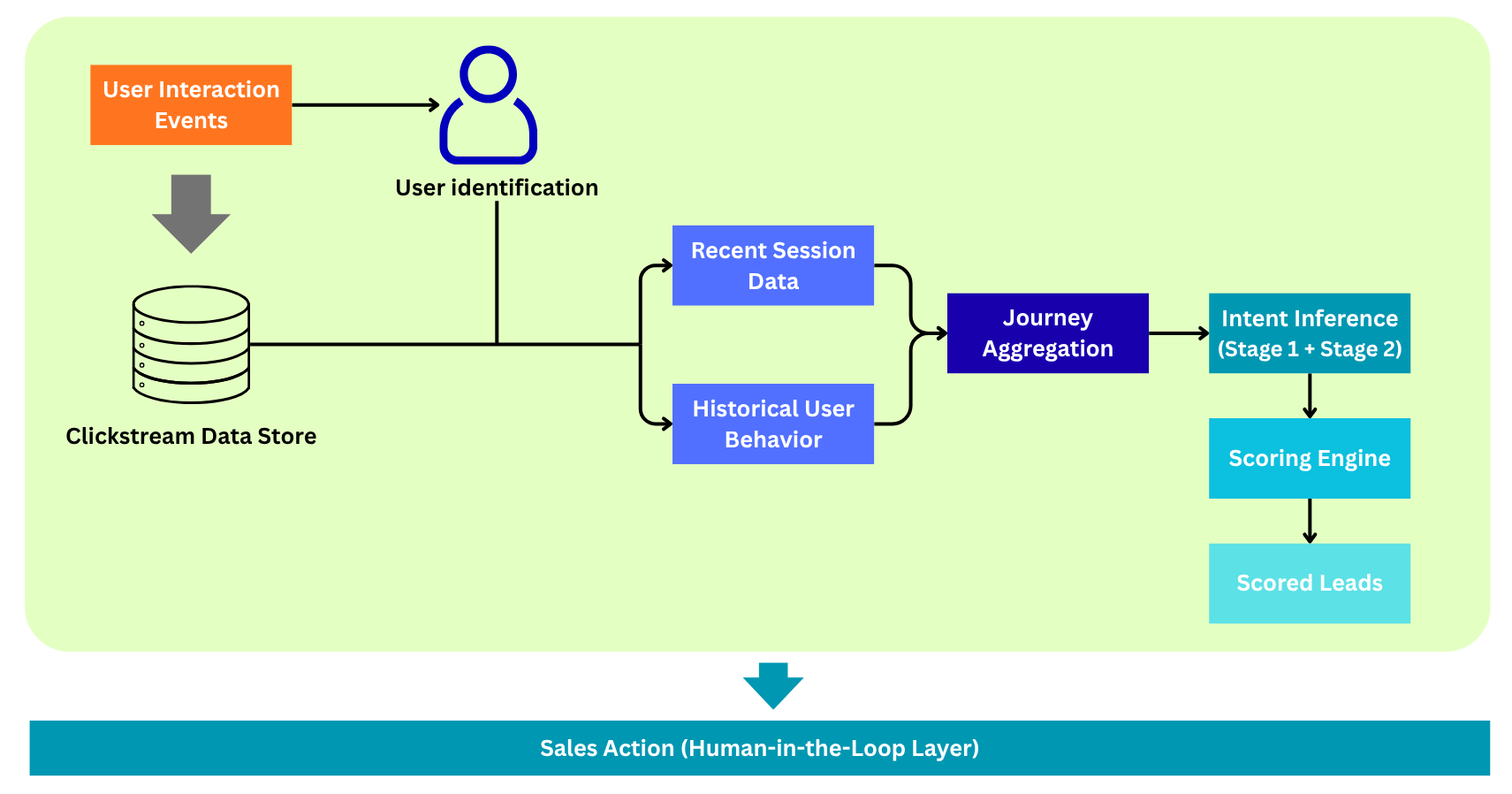

In this system, we separate data collection from intent inference to make the pipeline more efficient and scalable.

During the live session, we do not generate intent in real time. Instead, we capture and store user interactions (clickstream data) as structured events. This allows us to collect a complete view of the user’s journey without making repeated model calls.
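To make the "capture and store" step concrete, here is a minimal sketch of how interactions might be recorded as structured events. The `ClickEvent` fields and the in-memory `EventStore` are hypothetical stand-ins for whatever schema and data store a real pipeline would use; the only point is that the live session appends events and makes no model calls.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime
from typing import Dict, List


@dataclass(frozen=True)
class ClickEvent:
    """One user interaction. Field names are illustrative assumptions."""
    session_id: str
    timestamp: datetime
    page: str
    action: str   # e.g. "click" or "scroll"
    element: str  # e.g. "Cards" or "know_more"


class EventStore:
    """In-memory stand-in for a clickstream data store."""

    def __init__(self) -> None:
        self._by_session: Dict[str, List[ClickEvent]] = defaultdict(list)

    def append(self, event: ClickEvent) -> None:
        # Only store the event; no intent inference happens during the session.
        self._by_session[event.session_id].append(event)

    def journey(self, session_id: str) -> List[ClickEvent]:
        # Return the session's events in chronological order.
        events = self._by_session.get(session_id, [])
        return sorted(events, key=lambda e: e.timestamp)
```

Sorting on read, rather than on write, keeps the write path trivial, which matters if events can arrive out of order.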

Once the user’s identity is captured (e.g., via form submission or login), we retrieve:

- the most recent journey (current or last active session), and

- historical behavior (within a defined time window, such as the last 7 or 30 days).
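The retrieval step above can be sketched as follows. This is an illustration under assumptions, not the article's implementation: events are plain dicts with hypothetical `session_id` and `timestamp` keys, the "most recent journey" is taken to be the session that contains the latest event, and `window_days` is a parameter you would tune (7 or 30 in the text).

```python
from datetime import datetime, timedelta
from typing import Dict, List, Tuple

Event = Dict[str, object]  # assumed shape: {"session_id": ..., "timestamp": ..., ...}


def split_recent_and_history(
    events: List[Event], now: datetime, window_days: int = 30
) -> Tuple[List[Event], List[Event]]:
    """Return (events of the most recent session, older events inside the window)."""
    ordered = sorted(events, key=lambda e: e["timestamp"])
    if not ordered:
        return [], []

    # The session containing the latest event is treated as the recent journey.
    recent_id = ordered[-1]["session_id"]
    cutoff = now - timedelta(days=window_days)

    recent = [e for e in ordered if e["session_id"] == recent_id]
    history = [
        e for e in ordered
        if e["session_id"] != recent_id and e["timestamp"] >= cutoff
    ]
    return recent, history
```

Both returned lists are in chronological order, so they can be passed on to summarization without further sorting.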

Using this combined dataset, we perform intent inference in a single batch:

- Stage 1: summarize interactions into structured representations

- Stage 2: infer user intent from the aggregated journey

This approach enables us to generate a more accurate and context-rich understanding of user intent.

Finally, the inferred intent, along with historical behavior and user profile data, is used to compute a lead score.

An important point is that this scoring layer can be implemented in multiple ways. It can leverage LLM-based reasoning or traditional propensity models, which take inputs such as user journey, inferred intent, and historical behavior to produce the final score.
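As one illustration of the non-LLM option, the sketch below combines three signals with a weighted sum and maps the result to a label. Everything specific here is an assumption made for the example: the weights, the thresholds, the 30-day recency horizon, the cap of five sessions, and the `intent_strength` input (taken to be a 0 to 1 value produced upstream from the inferred intent). A trained propensity model would replace the hand-set weights.

```python
from typing import Dict

# Hypothetical weights; a real system would learn or tune these.
WEIGHTS: Dict[str, float] = {"intent": 0.6, "recency": 0.25, "frequency": 0.15}


def score_lead(intent_strength: float, days_since_last_visit: int,
               sessions_in_window: int) -> float:
    """Combine intent, recency, and frequency into a score between 0 and 1."""
    intent = min(max(intent_strength, 0.0), 1.0)
    # Recency decays linearly to zero over an assumed 30-day horizon.
    recency = max(0.0, 1.0 - days_since_last_visit / 30.0)
    # Frequency saturates at an assumed cap of five sessions.
    frequency = min(max(sessions_in_window, 0), 5) / 5.0
    return (WEIGHTS["intent"] * intent
            + WEIGHTS["recency"] * recency
            + WEIGHTS["frequency"] * frequency)


def label(score: float) -> str:
    """Map a score to a coarse label using illustrative thresholds."""
    if score >= 0.7:
        return "high"
    if score >= 0.4:
        return "medium"
    return "low"
```

Keeping the label thresholds separate from the score makes it easy to recalibrate them against observed conversion rates without touching the scoring logic.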

Architecture

The components of this pipeline are as follows:

User Interaction Events:

These are the actions performed by users on digital channels such as websites or mobile applications (e.g., clicks, scrolls, navigation).

Clickstream Data Store:

This is where all user interactions are stored along with metadata such as session IDs, timestamps, cookies, and other contextual information.

User Identification:

This acts as the trigger point of the system. When a user performs an action that reveals their identity (e.g., submitting a form, logging in, or providing an email address or phone number), the next stage of the pipeline is activated.

Recent Session Data:

This represents the most recent journey or session performed by the user, capturing their latest interactions.

Historical User Behavior:

This includes past user interactions within a defined time window (e.g., last 7 days or 30 days), providing a broader view of user engagement.

Journey Aggregation:

In this step, recent and historical journeys are combined and preprocessed. This includes session stitching, ordering events, and filtering relevant interactions.

Intent Inference:

This is the core component of the system. Here, we infer user intent based on both recent behavior and historical patterns using the two-stage approach (summarization + intent extraction).

Scoring Engine:

This component converts inferred intent into a business signal. It can be implemented using traditional propensity models or LLM-based classification to determine whether a user’s interest in a product is high, medium, or low.

Scored Leads:

Leads are assigned scores or labels based on their likelihood to convert. High-intent leads can then be prioritized for follow-up.

Sales Action (Human-in-the-Loop Layer):

This is a critical component of the system. The final outputs are reviewed or acted upon by sales teams, who validate model signals and take appropriate actions such as outreach, follow-ups, or campaign targeting.
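Of the components listed above, Journey Aggregation is the most mechanical, so a short sketch may help. It assumes events are dicts with hypothetical `cookie_id`, `action`, and `timestamp` keys, that stitching means keeping events from every cookie already linked to the identified user, and that `RELEVANT_ACTIONS` is a filter you would define for your own site.

```python
from typing import Dict, FrozenSet, Iterable, List, Set

Event = Dict[str, object]

# Hypothetical set of actions considered informative for intent.
RELEVANT_ACTIONS: FrozenSet[str] = frozenset({"click", "scroll", "form_submit"})


def aggregate_journey(
    events: Iterable[Event],
    user_cookies: Set[str],
    relevant_actions: FrozenSet[str] = RELEVANT_ACTIONS,
) -> List[Event]:
    """Stitch, filter, and order events into one journey for a single user."""
    # Stitching: keep events from any cookie known to belong to this user.
    stitched = [e for e in events if e["cookie_id"] in user_cookies]
    # Filtering: drop interactions that carry little signal.
    filtered = [e for e in stitched if e["action"] in relevant_actions]
    # Ordering: sort chronologically; ties keep their input order (stable sort).
    return sorted(filtered, key=lambda e: e["timestamp"])
```

The output is a single ordered list that can be fed step by step into the summarization stage.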

Sample Tests

Before building out the full architecture, I ran a few experiments on sample data to validate the approach.

For this system, I used Google’s Gemini 3 Flash Preview model. However, any multimodal model with similar capabilities can be used.

To simulate real-world behavior, I created sample journey data based on interactions from the Axis Bank website. You can use any dataset relevant to your use case and structure it in a similar format.
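For reference, the Stage 1 loop below reads five keys from each journey step: `step`, `page`, `action`, `element`, and `screenshot`. The records in this sketch are placeholders that mirror the Cards journey from the paper summary; the page labels and file names are invented for illustration and are not the actual sample data. The `validate_journey` helper is also hypothetical, added only to catch missing keys before any model call is made.

```python
REQUIRED_KEYS = {"step", "page", "action", "element", "screenshot"}

# Illustrative records only; page labels and file names are placeholders.
sample_data = [
    {"step": 1, "page": "Home", "action": "click",
     "element": "Cards", "screenshot": "step_1.png"},
    {"step": 2, "page": "Cards_page", "action": "scroll",
     "element": "Cards_page", "screenshot": "step_2.png"},
    {"step": 3, "page": "Cards_page", "action": "click",
     "element": "know_more", "screenshot": "step_3.png"},
]


def validate_journey(journey):
    """Raise ValueError if any step lacks a key that the Stage 1 loop reads."""
    for index, record in enumerate(journey):
        missing = REQUIRED_KEYS - record.keys()
        if missing:
            raise ValueError(
                f"step at index {index} is missing keys: {sorted(missing)}"
            )


validate_journey(sample_data)
```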

Stage 1: Interaction Summarization

In this stage, we follow the approach described in the paper. For each step in the journey, we use the surrounding context (previous and next interactions) along with the current action to generate a structured summary.

# `sample_data` (the list of journey steps) and `get_llm_res` (the model-call
# helper) are assumed to be defined earlier.
result = []

for i, ele in enumerate(sample_data):
    step = ele['step']
    page = ele['page']
    action = ele['action']
    element = ele['element']
    screenshot = "./data/" + ele['screenshot']
    print(step)  # simple progress indicator

    # Previous step (context only); "N/A" for the first step
    if i > 0:
        prev = sample_data[i - 1]
        prev_page = prev.get('page', 'N/A')
        prev_action = prev.get('action', 'N/A')
        prev_element = prev.get('element', 'N/A')
    else:
        prev_page, prev_action, prev_element = "N/A", "N/A", "N/A"

    # Next step (context only); "N/A" for the last step
    if i < len(sample_data) - 1:
        nxt = sample_data[i + 1]
        next_page = nxt.get('page', 'N/A')
        next_action = nxt.get('action', 'N/A')
        next_element = nxt.get('element', 'N/A')
    else:
        next_page, next_action, next_element = "N/A", "N/A", "N/A"

    stage1_prompt = f"""You are given a user interaction on a website.

Input:
- Previous Step (optional):
  Page: {prev_page}
  Action: {prev_action}
  Element: {prev_element}
- Current Step:
  Screenshot: <image>
  Page: {page}
  Action: {action}
  Element: {element}
- Next Step (optional):
  Page: {next_page}
  Action: {next_action}
  Element: {next_element}

Task:
Extract a structured summary of ONLY the CURRENT step.
Use previous and next steps ONLY as context to better understand the current interaction.

Output format:
<response start>
screen_context: [list of key visible elements]
user_action: what the user did
<response end>

Rules:
- Follow the exact format strictly
- Do NOT output JSON
- screen_context should contain 3–5 concise, relevant items
- Focus ONLY on the current step for output
- Use previous/next steps ONLY to resolve ambiguity
- Do NOT infer intent
- Do NOT add information not grounded in the inputs
- Do NOT include any explanation or extra text outside the response block
"""

    # One model call per step: the prompt plus the current step's screenshot
    res = get_llm_res(stage1_prompt, image_path=screenshot)
    result.append((step, res.text))
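Stage 2 reads from `parsed_res`, a list of dicts, while the loop above stores raw model text in `result`. The parsing step in between is not shown, so the following is one possible sketch of it rather than the original code. It assumes the model followed the `<response start>` / `<response end>` format requested in the prompt; `parse_summary` and `parse_results` are hypothetical helper names.

```python
import re
from typing import Dict, List, Tuple

BLOCK_RE = re.compile(r"<response start>(.*?)<response end>", re.DOTALL)
CONTEXT_RE = re.compile(r"screen_context:\s*\[(.*?)\]", re.DOTALL)
ACTION_RE = re.compile(r"user_action:[ \t]*(.+)")


def parse_summary(step, text: str) -> Dict[str, object]:
    """Parse one Stage 1 response into the dict shape that Stage 2 expects."""
    block = BLOCK_RE.search(text)
    # Fall back to the whole text if the model omitted the markers.
    body = block.group(1) if block else text

    context = CONTEXT_RE.search(body)
    action = ACTION_RE.search(body)

    items: List[str] = []
    if context:
        # Naive comma split: an item that itself contains a comma will be cut.
        items = [part.strip() for part in context.group(1).split(",") if part.strip()]

    return {
        "step": step,
        "screen_context": items,
        "user_action": action.group(1).strip() if action else "",
    }


def parse_results(result: List[Tuple[object, str]]) -> List[Dict[str, object]]:
    """Parse every (step, raw_text) pair produced by the Stage 1 loop."""
    return [parse_summary(step, text) for step, text in result]
```

With this in place, `parsed_res = parse_results(result)` would produce the input for the Stage 2 snippet. Responses that do not match the format yield an empty `screen_context` and `user_action`, which is worth logging in practice.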

Stage 2: Intent Extraction

Once we have the summaries for the entire journey, we pass them to the model to infer the overall user intent.

# `parsed_res` is assumed to hold the Stage 1 outputs parsed into dicts with the
# keys 'step', 'screen_context' (a list of strings), and 'user_action'.
context = ''

for ele in parsed_res:
    step = ele['step']
    screen_context = ",".join(ele['screen_context'])
    user_action = ele['user_action']
    # Append one text block per step, in journey order
    context = context + "\n" + f"step: {step}\nscreen_context: {screen_context}\nuser_action: {user_action}"

stage2_prompt = f"""
You are given a sequence of user interaction summaries from a UI session.

Each step contains:
- screen_context: key elements visible on screen
- user_action: action performed by the user

Your task:
Infer the overall user intent behind this sequence.

Guidelines:
- Base your answer ONLY on the provided summaries
- Do NOT add information that is not present
- The intent should be concise, complete, and reflect the full trajectory
- Do NOT include step-by-step explanation

Input:
{context}

Output:
"""

# Text-only call: Stage 2 works from the summaries, not the screenshots
res = get_llm_res(stage2_prompt)

Finally, the inferred intent is passed to the scoring engine, where it is converted into a lead score or classification label that can be used for downstream decision-making.
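As a small illustration of that downstream use, the sketch below orders a batch of scored leads for follow-up. The lead dict shape (`id`, `label`, `score`) and the `prioritize` helper are assumptions made for the example; the labels follow the high, medium, low scheme mentioned earlier.

```python
from typing import Dict, List

# Lower rank means earlier follow-up.
LABEL_RANK: Dict[str, int] = {"high": 0, "medium": 1, "low": 2}


def prioritize(leads: List[dict]) -> List[dict]:
    """Order leads by label first, then by descending score within a label."""
    return sorted(leads, key=lambda lead: (LABEL_RANK[lead["label"]], -lead["score"]))


queue = prioritize([
    {"id": "a", "label": "low", "score": 0.2},
    {"id": "b", "label": "high", "score": 0.8},
    {"id": "c", "label": "high", "score": 0.9},
    {"id": "d", "label": "medium", "score": 0.5},
])
# The follow-up order is now c, b, d, a.
```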

Code & Resources

You can download the complete implementation (including the full notebook) from the link below:

Code: Click to download the .zip file

This system enables prioritization of incoming leads based on their likelihood to convert, helping reduce response time and improve overall sales efficiency.

If you’re interested in building a similar system or exploring this approach further, feel free to reach out.

LinkedIn: Connect on LinkedIn

Portfolio: veerkhot.com