Recently, while building an end-to-end Agentic AI solution for BFSI (banking, financial services, and insurance), I truly understood the importance of the Human-in-the-Loop layer in Agentic AI architecture. BFSI is a compliance- and regulation-heavy industry where even small mistakes can lead to large losses, such as reputational damage and financial loss.

Agentic AI typically follows the Reason and Act framework (ReAct). For reasoning we use LLMs, and for action we can use anything that performs a task, such as an API call or a Python function.
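To make the Reason-and-Act split concrete, here is a minimal Python sketch. It assumes a stubbed `reason` function standing in for the LLM call and a hypothetical `get_card_balance` tool backed by toy data. A real agent would prompt an LLM and parse the action it chooses; both names are illustrative, not part of any library.

```python
from typing import Callable, Dict, Optional, Tuple


def get_card_balance(customer_id: str) -> str:
    """Hypothetical tool (the "Act" step); in practice an API call or any Python function."""
    balances = {"C001": "12,500.00"}  # toy data for illustration only
    return balances.get(customer_id, "unknown")


TOOLS: Dict[str, Callable[[str], str]] = {"get_card_balance": get_card_balance}


def reason(query: str, observation: Optional[str]) -> Tuple[str, str]:
    """Stand-in for the LLM (the "Reason" step).

    Returns (action, argument). A real agent would send the query and the
    latest observation to an LLM and parse its chosen action; this stub
    ignores the query text and hard-codes a single decision path.
    """
    if observation is None:
        return "get_card_balance", "C001"
    return "finish", f"Your outstanding balance is {observation}."


def react_agent(query: str, max_steps: int = 3) -> str:
    observation: Optional[str] = None
    for _ in range(max_steps):
        action, arg = reason(query, observation)  # Reason: decide the next action
        if action == "finish":
            return arg
        observation = TOOLS[action](arg)  # Act: run the tool and observe the result
    return "Step limit reached; escalating to a human."


print(react_agent("What is my card balance?"))
# Your outstanding balance is 12,500.00.
```

The step limit is a simple guard: if the agent cannot finish within `max_steps`, it hands off to a human instead of looping, a small-scale version of the HITL idea described later in this article.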

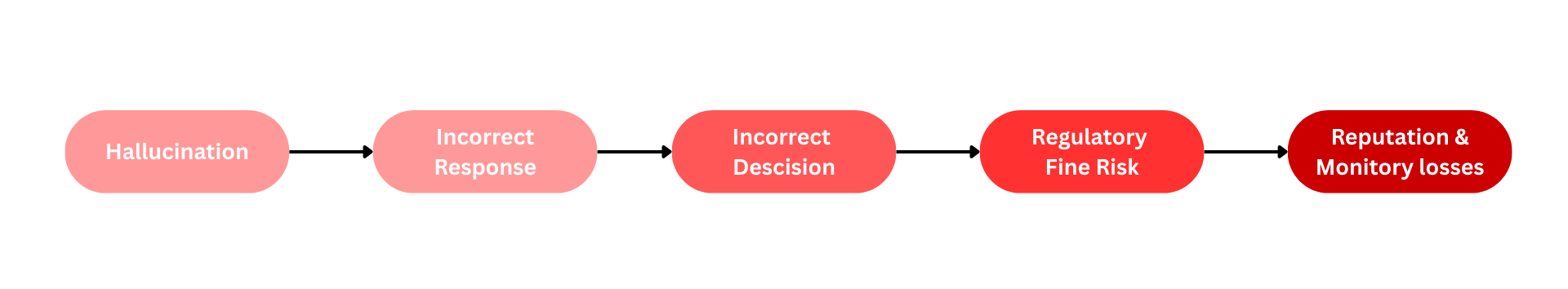

In the reasoning layer, however, the generative nature of LLMs introduces a problem called hallucination.

Hallucination

Hallucination occurs when an LLM, while reasoning over or processing the instructions passed through the prompt, misinterprets them or produces content that is not grounded in its input, and presents the wrong response with high confidence. This matters far less in tasks like general content writing or marketing, but it can cause serious problems in regulation-heavy domains like BFSI and legal.

So, does that mean we should stop using LLMs for such problems?

The short answer is no. We should still solve the problems that call for Agentic AI, but while doing so we must handle the edge cases that can lead to regulatory losses. Humans are better at making decisions on sensitive, regulatory problems. So any layer in an Agentic AI architecture where humans intervene for validation or decision-making is called the Human-in-the-Loop (HITL) layer.

HITL is the creative part of an Agentic AI system

The best system designs come from breaking a complex system down into smaller subproblems, each with a single responsibility. Once you understand each submodule and assign it a risk weight, the answer to where you need the HITL layer becomes clear. How you break the problem down and decide on a solution depends entirely on your creativity and problem-solving skills. That is why deciding where an Agentic AI system needs HITL to manage these risks is the creative part of system design.
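One way to make risk weighting concrete is to score each submodule and flag those above a cut-off for HITL. The sketch below assumes hand-assigned weights between 0 and 1 and a hypothetical threshold of 0.7; the module names and numbers are illustrative only.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Submodule:
    name: str
    risk_weight: float  # 0.0 (harmless) to 1.0 (severe regulatory impact), set by the designer


HITL_THRESHOLD = 0.7  # hypothetical cut-off; tune per domain and regulation


def needs_hitl(modules: List[Submodule], threshold: float = HITL_THRESHOLD) -> List[str]:
    """Return the names of submodules risky enough to require a human in the loop."""
    return [m.name for m in modules if m.risk_weight >= threshold]


modules = [
    Submodule("faq_answering", 0.2),
    Submodule("issue_classification", 0.4),
    Submodule("dispute_resolution", 0.9),
    Submodule("interest_refund", 0.8),
]
print(needs_hitl(modules))  # ['dispute_resolution', 'interest_refund']
```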

For example:

To understand HITL properly, we will use a customer-experience example whose pattern applies across domains. Let's say we need to implement an Agentic AI system for a bank's credit card product that answers customer queries automatically.

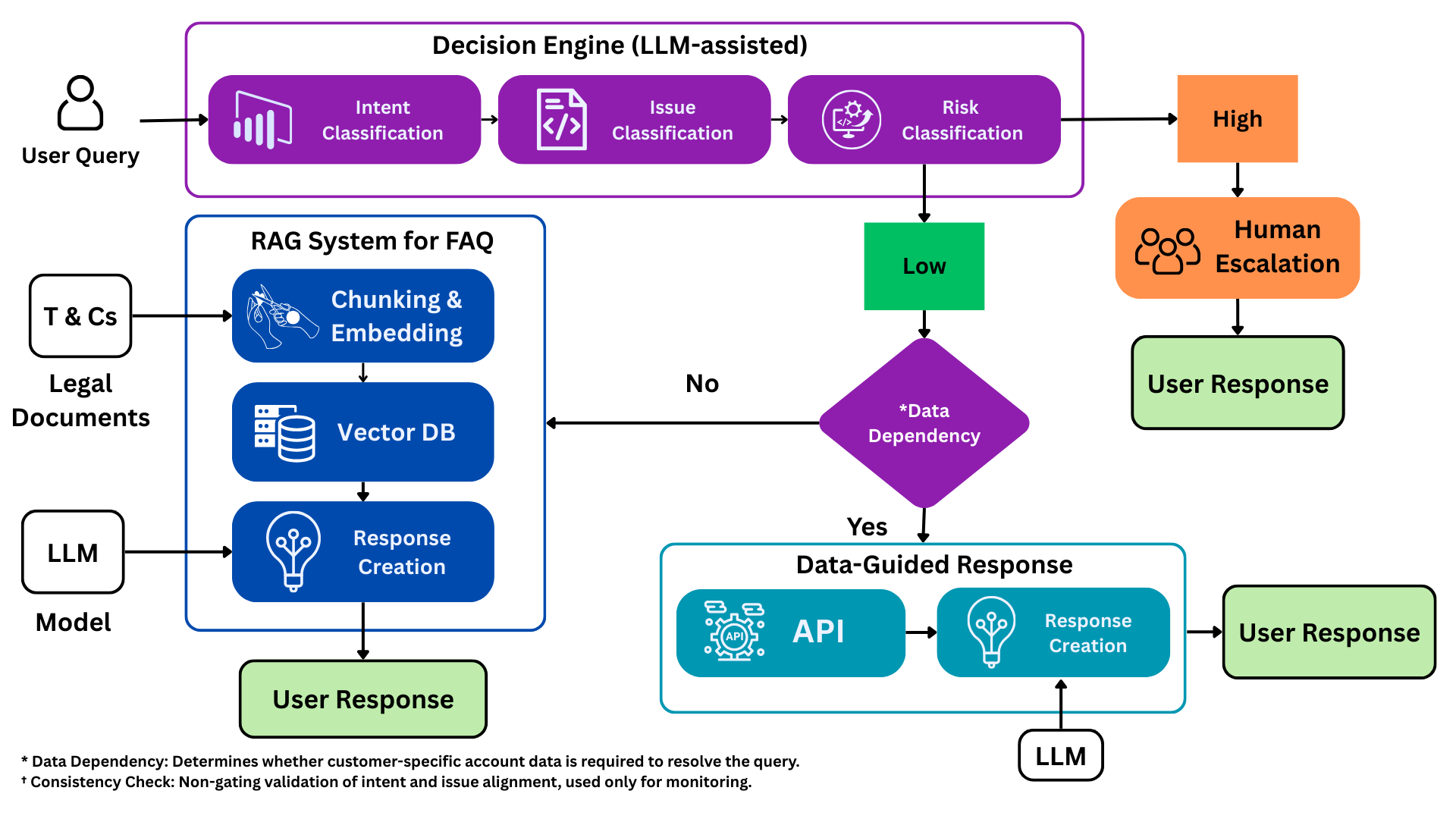

From the above diagram:

The decision engine takes a customer query as input. The first LLM interprets the query and classifies it as either a complaint or an inquiry. Next, issue classification identifies the specific issue, for example whether it relates to payment, billing, or account closure.
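A minimal sketch of how the output of these first two steps might be validated, assuming the LLM is prompted to reply with JSON containing `intent` and `issue` fields. The label sets, field names, and fallback policy are hypothetical. Rejecting labels outside the allowed sets is one simple guard against a hallucinated category slipping into the downstream layers.

```python
import json
from dataclasses import dataclass

# Hypothetical label sets; tuples (not sets) so that membership checks never
# raise on unhashable values such as a list the model might return.
INTENTS = ("complaint", "inquiry")
ISSUES = ("payment", "billing", "account_closure", "other")


@dataclass
class QueryLabels:
    intent: str
    issue: str


def parse_classification(llm_output: str) -> QueryLabels:
    """Parse the LLM's JSON reply and replace any out-of-set label with a safe default."""
    try:
        data = json.loads(llm_output)
    except json.JSONDecodeError:
        data = None
    if not isinstance(data, dict):
        data = {}
    intent = data.get("intent")
    issue = data.get("issue")
    # Assumed policy: an unknown intent is treated as a complaint so it takes
    # the stricter path, and an unknown issue falls back to "other".
    if intent not in INTENTS:
        intent = "complaint"
    if issue not in ISSUES:
        issue = "other"
    return QueryLabels(intent, issue)


print(parse_classification('{"intent": "inquiry", "issue": "billing"}'))
# QueryLabels(intent='inquiry', issue='billing')
print(parse_classification('{"intent": "praise", "issue": "rewards"}'))
# QueryLabels(intent='complaint', issue='other')
```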

Risk classification then tags all queries related to unauthorized transactions, disputed amounts, incorrect interest charges, and similar issues as high risk. Anything that is not regulation-heavy is tagged as low risk.
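Risk tagging can start as simple keyword rules before any model is involved. The sketch below assumes hypothetical phrase lists for the three high-risk categories named above; real rules should be derived from the actual complaint data.

```python
from typing import Dict, Tuple

# Hypothetical keyword rules for illustration only.
HIGH_RISK_RULES: Dict[str, Tuple[str, ...]] = {
    "unauthorized_transaction": ("unauthorized", "did not make", "not authorised"),
    "disputed_amount": ("dispute", "charged twice", "wrong amount"),
    "incorrect_interest": ("interest charge", "wrong interest", "incorrect interest"),
}


def classify_risk(query: str) -> Tuple[str, str]:
    """Return ("high", matched_category) on the first rule hit, else ("low", "none")."""
    text = query.lower()
    for category, phrases in HIGH_RISK_RULES.items():
        if any(phrase in text for phrase in phrases):
            return "high", category
    return "low", "none"


print(classify_risk("I see a payment I did not make on my card"))
# ('high', 'unauthorized_transaction')
print(classify_risk("How do I redeem my reward points?"))
# ('low', 'none')
```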

These modules should be designed by looking at actual data; for this example, let's assume we have it. During EDA (exploratory data analysis), we saw more inquiries than complaints. A deeper dive showed that some complaints are regulatory in nature, meaning risky. Because of their high-risk nature, we designed prompts with a large, diverse set of examples to classify whether a query carries high risk. Using additional techniques such as traditional ML models or custom rules, we make sure that most high-risk complaints are correctly classified by this layer. We can tolerate low-risk queries being classified as high risk (false positives), but we must not classify high-risk queries as low risk (false negatives).
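The asymmetry in the last sentence is a statement about recall on the high-risk class. A minimal sketch, assuming the risk classifier outputs a score between 0 and 1 and that a small labeled validation set is available (the numbers below are made up for illustration): pick the highest score threshold that still meets a target recall for high-risk queries.

```python
from typing import Sequence


def recall_at(threshold: float, scores: Sequence[float], labels: Sequence[bool]) -> float:
    """Share of truly high-risk queries (label True) with score >= threshold."""
    positives = sum(labels)
    if positives == 0:
        return 1.0
    caught = sum(1 for s, y in zip(scores, labels) if y and s >= threshold)
    return caught / positives


def pick_threshold(scores: Sequence[float], labels: Sequence[bool], target_recall: float = 0.99) -> float:
    """Highest candidate threshold whose high-risk recall meets the target.

    Recall can only rise as the threshold falls, so scanning from the top
    and stopping at the first success gives the highest qualifying value.
    """
    for t in sorted(set(scores), reverse=True):
        if recall_at(t, scores, labels) >= target_recall:
            return t
    return min(scores)  # only reached if target_recall > 1.0


# Made-up validation data: risk scores and true labels (True = high risk).
scores = [0.95, 0.80, 0.60, 0.40, 0.30, 0.10]
labels = [True, True, False, True, False, False]

print(pick_threshold(scores, labels, target_recall=1.0))  # 0.4
```

With a target recall of 1.0, the threshold drops to 0.4, so the low-risk query scored 0.60 is flagged as high risk. That false positive is the cost the design accepts in exchange for missing no high-risk query in this sample.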

Next, we design the decision engine so that only low-risk queries pass to the downstream layers, and all high-risk queries are escalated to the right team. This is where the Human-in-the-Loop layer comes in: high-risk complaints are processed by human teams rather than by AI, which minimizes the risk associated with regulatory complaints.
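The routing rule itself can be very small. This sketch assumes the risk label has already been produced by the risk classification layer and uses plain lists as stand-ins for the automated pipeline and the human team's queue; `Router` is a hypothetical name.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Router:
    """Hypothetical decision-engine router; the lists stand in for real queues."""
    automated: List[str] = field(default_factory=list)
    human_queue: List[str] = field(default_factory=list)

    def route(self, query: str, risk: str) -> str:
        if risk == "low":
            self.automated.append(query)  # handled by downstream AI layers
            return "ai"
        # Any label other than "low" (including unexpected values) goes to humans.
        self.human_queue.append(query)  # the HITL layer
        return "human"


router = Router()
print(router.route("How do I redeem my reward points?", "low"))  # ai
print(router.route("I was charged for a transaction I did not make", "high"))  # human
print(router.route("Why was my card blocked?", "unknown"))  # human
```

Treating any label other than "low" as high risk keeps the failure mode safe: an unexpected or misspelled label ends up with a human rather than with the AI.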

This architecture not only improves response time but also automatically prioritizes the complaints that most need attention, instead of sending every query directly to the team.

Conclusion

We need HITL layers to handle sensitive problems that carry high risk. With this layer, we can minimize the impact of hallucination and resolve these issues with a human touch. Our example shows the difference: in a traditional system, every query flows to the human team, so response times grow longer even for high-risk complaints. Now that the system handles all low-risk queries, such as FAQs and low-risk complaints, only high-risk complaints reach the team, which improves response time and, ultimately, customer experience.
